Saif Mahmud

Ph.D. Candidate · Cornell University · SciFi Lab

Advised by Prof. Cheng Zhang

Committee: Prof. Tanzeem Choudhury, Prof. Deborah Estrin

I build wearable systems that combine acoustic sensing, inertial sensing (IMUs), and egocentric vision with machine learning to understand fine-grained human behavior, from full-body pose tracking to everyday activity recognition, on devices such as smart glasses, smartwatches, rings, and clothing.

News & Updates

- May 2026 new role Started as a Research Intern on the founding team at BehaviorAI in NYC. We are building state-of-the-art wearable systems to understand fine-grained human behavior.

- Apr 2026 service Serving as a Technical Program Committee member at UbiComp / ISWC 2026.

- Nov 2025 milestone Passed my Admission to Candidacy (A Exam). Now officially a Ph.D. Candidate.

- Oct 2024 award "EchoGuide" wins Best Paper Honorable Mention at UbiComp / ISWC 2024.

- Sep 2024 paper "ActSonic", "Ring-a-Pose", and "SonicID" accepted at IMWUT / UbiComp 2025.

- Jul 2024 paper "MunchSonic" and "EchoGuide" accepted at UbiComp / ISWC 2024.

- Jul 2024 paper "SeamPose" accepted at UIST 2024.

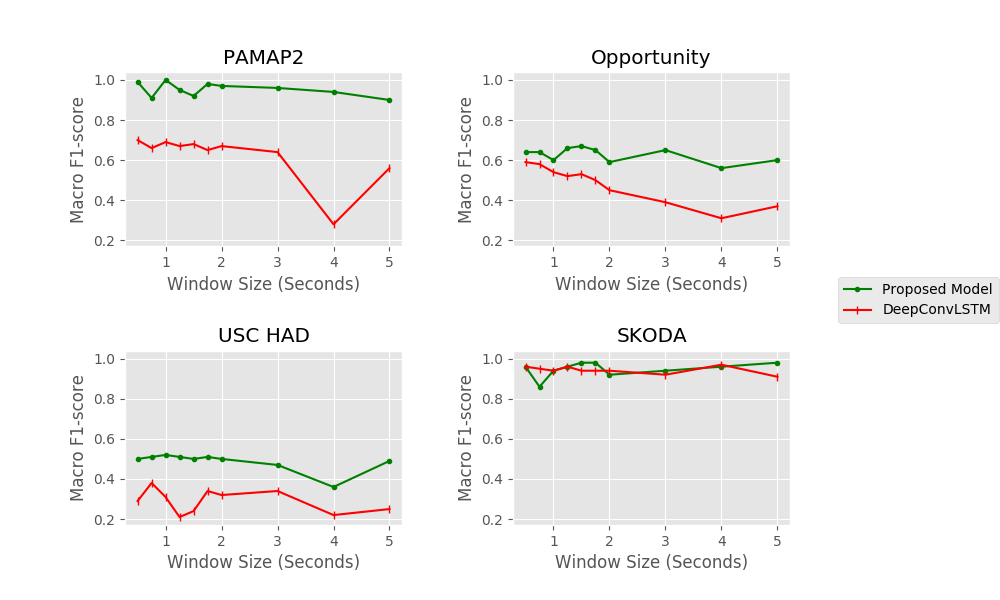

- Jan 2024 paper "STLGRU" accepted at PAKDD 2024.

- Nov 2023 paper "GazeTrak" accepted at MobiCom 2024.

- Jul 2023 paper "PoseSonic" accepted at IMWUT / UbiComp 2023.

- Aug 2022 milestone Started my Ph.D. at Cornell University.

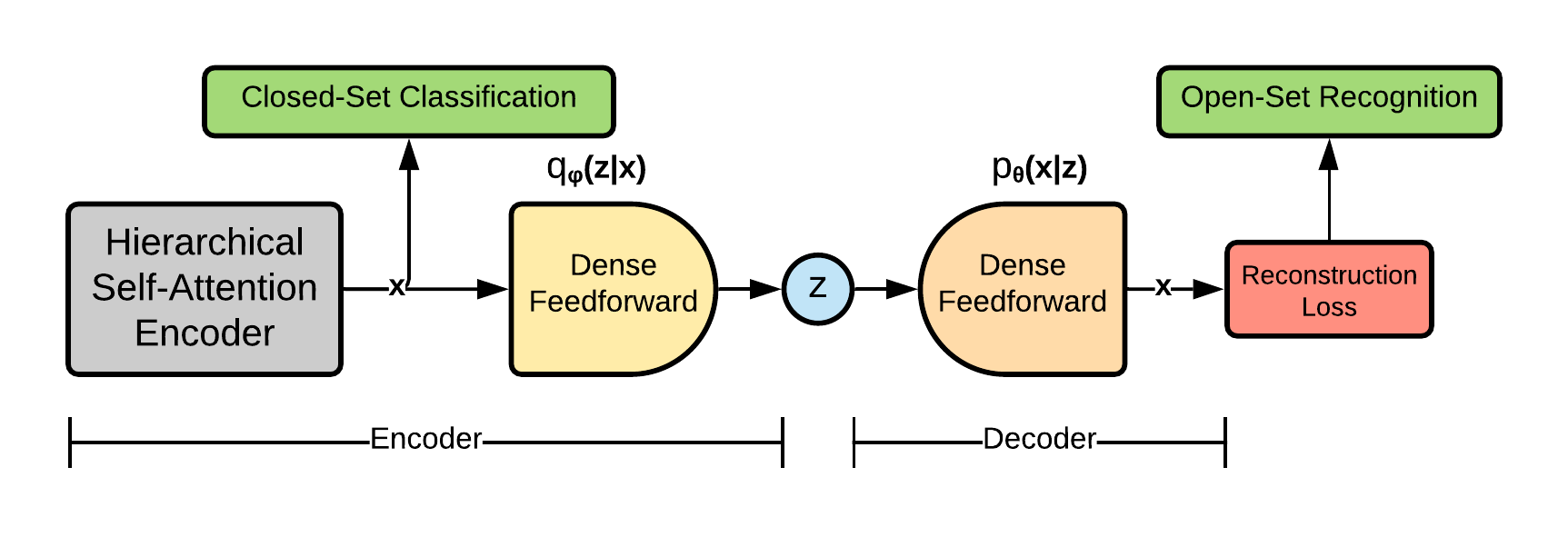

- Feb 2021 paper "Hierarchical Self Attention Based Autoencoder" accepted at PAKDD 2021.

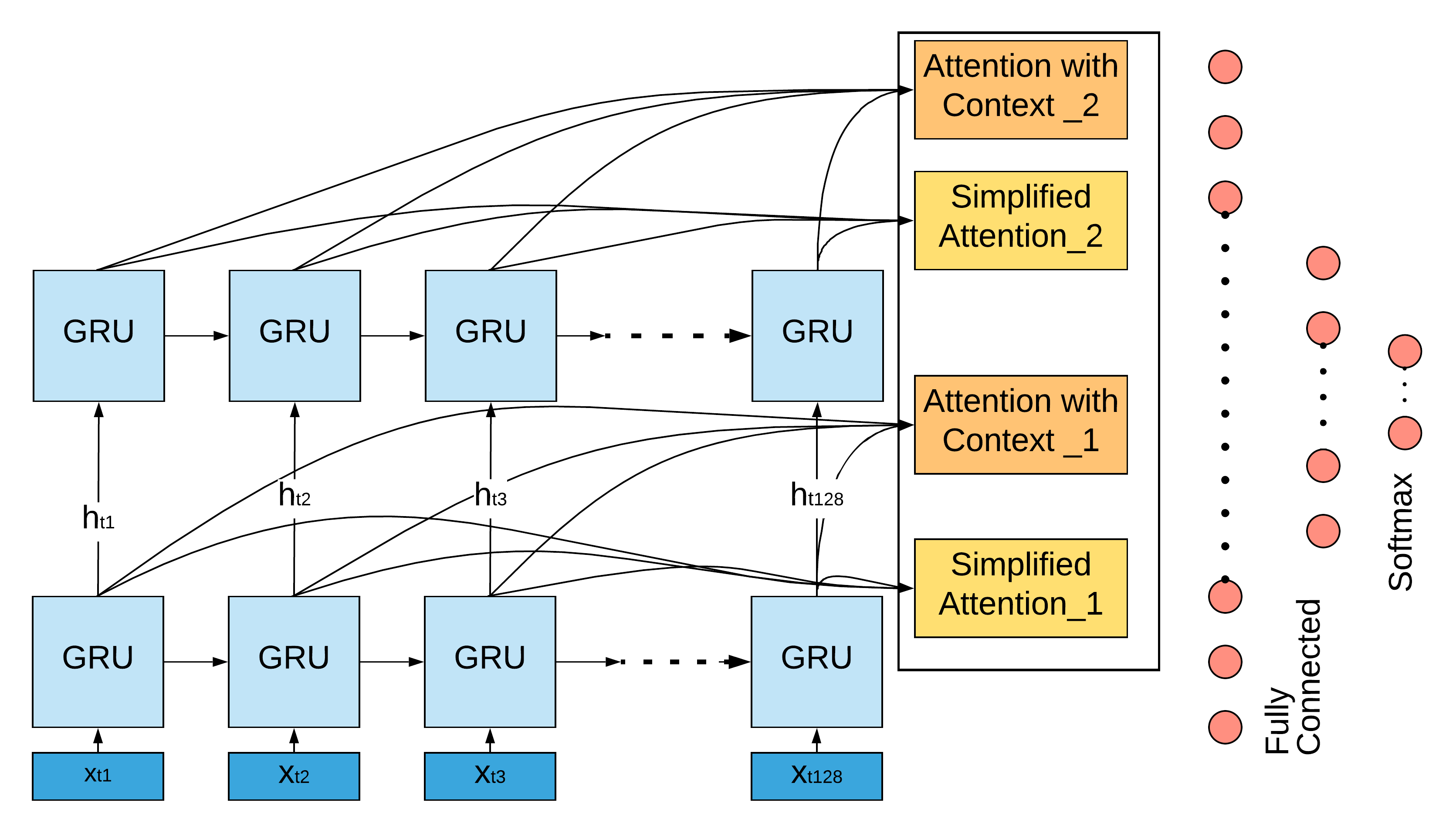

- Jan 2020 paper "Human Activity Recognition Using Self-Attention" accepted at ECAI 2020.

Research

Publications

Acoustic Sensing on Smart Glasses

7 papers · Active sonar on glasses for fine-grained, camera-free sensing of the body, face, and everyday behaviors, from recognizing daily activities and dietary actions to tracking 3D pose and gaze.

SonicGlasses: Smart Glasses Platform for Multi-Task Human Activity Tracking with Acoustic Sensing

Preprint · Under Review

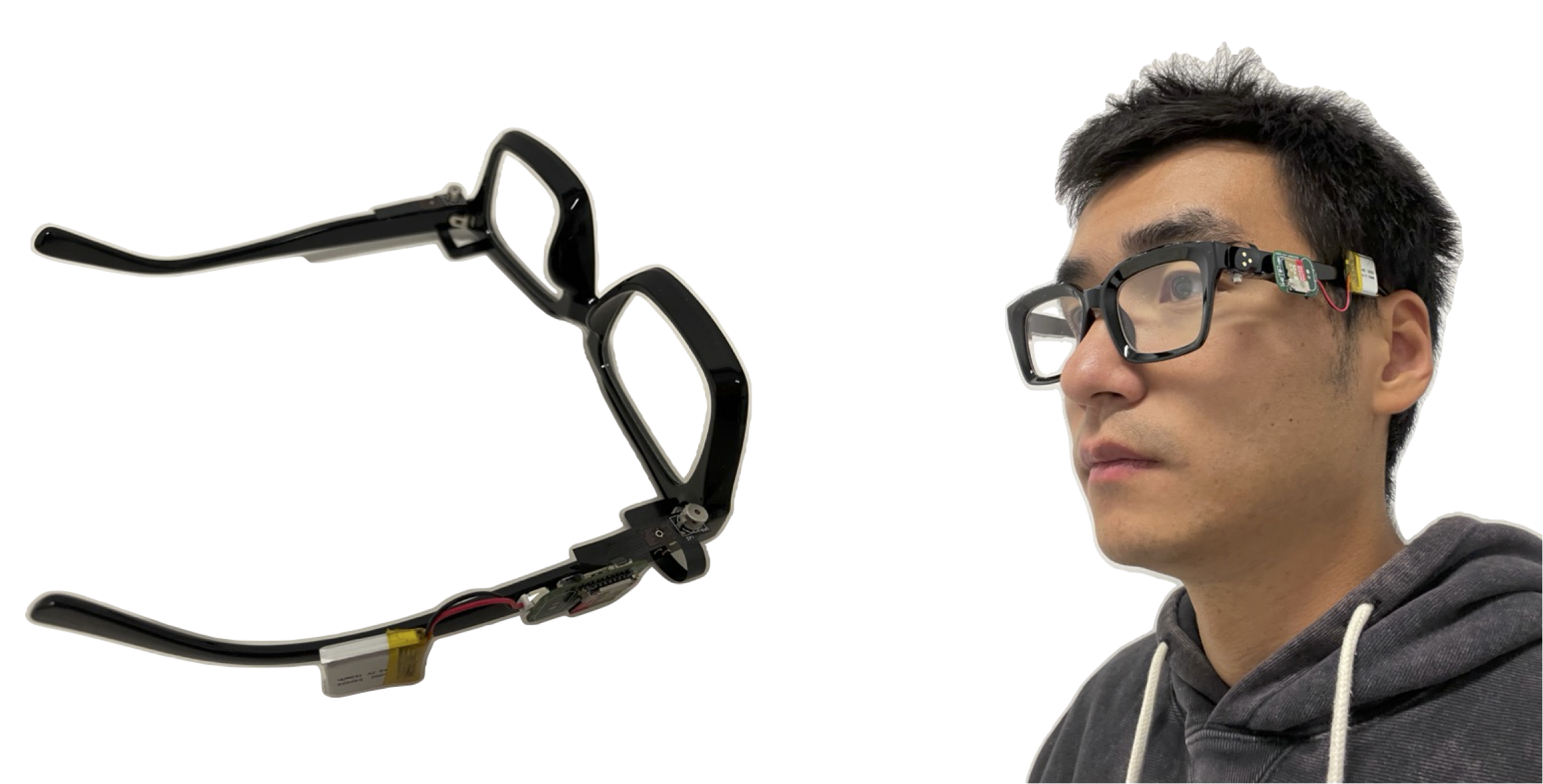

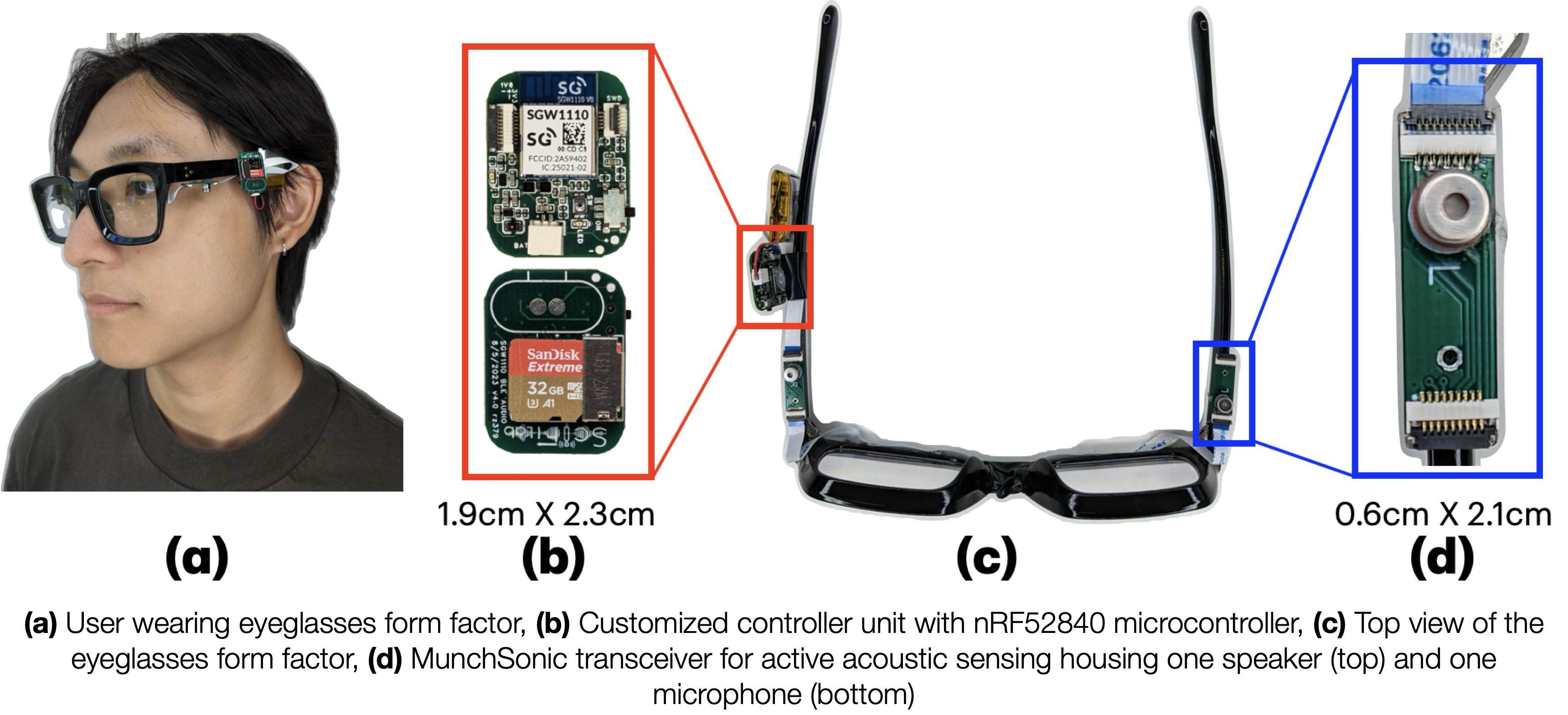

MunchSonic: Tracking Fine-grained Dietary Actions through Active Acoustic Sensing on Eyeglasses

UbiComp / ISWC 2024

EchoGuide: Active Acoustic Guidance for LLM-Based Eating Event Analysis from Egocentric Videos

UbiComp / ISWC 2024

Wearable Sensing on Smartwatches, Rings & Clothing

4 papers · Extending acoustic, inertial, and capacitive sensing beyond glasses to commercial smartwatches, rings, and clothing, enabling full-body pose tracking and continuous hand pose recognition on devices people already wear.

EchoMotion: Towards Full-Body Pose Tracking with a Single Smartwatch Using Active Acoustic Sensing

Preprint · Under Review

SonicFit: Cross-User Full-Body Pose Tracking for Fitness Exercises Using Active Acoustic and Inertial Sensing with a Single Commercial Smartwatch

Preprint · Under Review

Machine Learning for Sensor Data & Activity Recognition

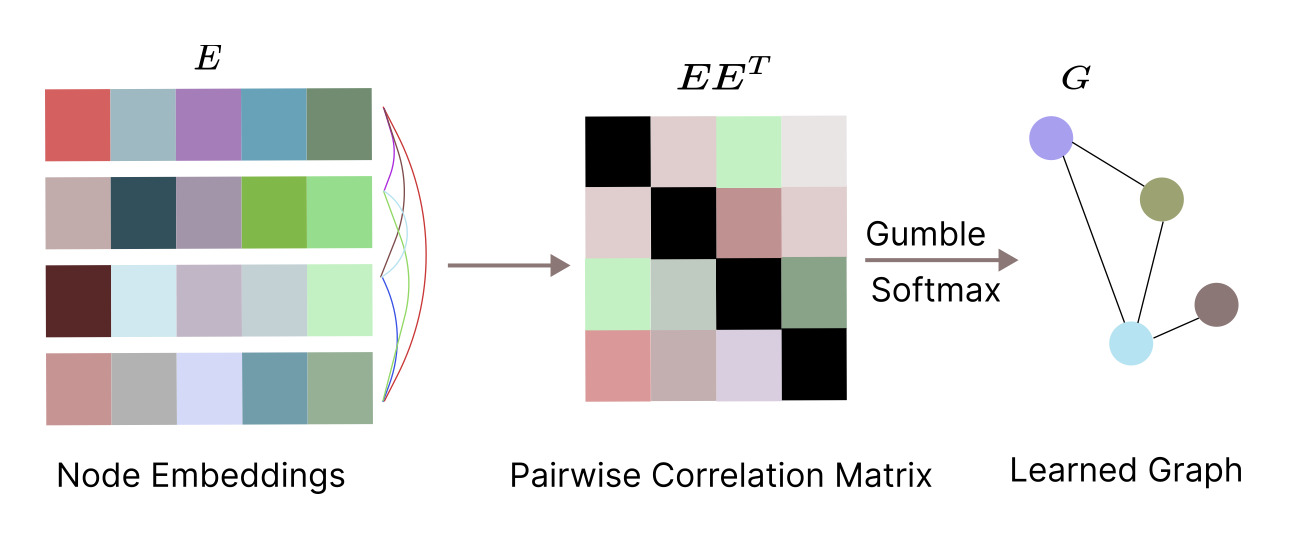

4 papers · Self-attention, autoencoder, and graph-based sequence models for human activity recognition and spatio-temporal prediction tasks on wearable and IoT sensor data.

Patents

Wearable Devices with Wireless Transmitter-Receiver Pairs for Acoustic Sensing of User Characteristics →

Press & Media

In the press

Glasses use sonar, AI to interpret upper body poses in 3D →

These sonar-equipped glasses could pave the way for better VR body tracking →

Spec-tacular Body Pose Estimation →

Smart glasses could boost privacy by swapping cameras for this 100-year-old technology →

PoseSonic: Sonar, AI Powers Glasses to Track Upper Body Movements in 3D →

New sonar-equipped glasses use AI to interpret upper body poses in 3D →

Glasses with sonar for better body tracking →

AI-powered 'sonar' on smartglasses tracks gaze and facial expressions →

No-Camera Eye Tracking: Cornell Invents Tech To Track Gaze Minus Surveillance →

Smart glasses use sonar to work out where you're looking →

Odd-looking glasses track your eyes and facial expressions without cameras →

AI-powered 'sonar' on smartglasses tracks gaze, facial expressions →

AI-Powered Sonar on Smartglasses Promises to Monitor Gaze and Facial Expressions →

Service

- TPC ACM UbiComp / ISWC · 2026

- Reviewer ACM IMWUT / UbiComp · 2023, 2024, 2025, 2026 🏆 Outstanding Review (2023)

- Reviewer ACM CHI · 2024, 2025, 2026

- Reviewer ACM UIST · 2025, 2026

- Reviewer ACM UbiComp / ISWC · 2023

- Reviewer ACM Transactions on Internet of Things · 2023

Contact

Email: sm2446@cornell.edu

Office: 239 Gates Hall,

Cornell University, 107 Hoy Rd, Ithaca, NY 14853, USA